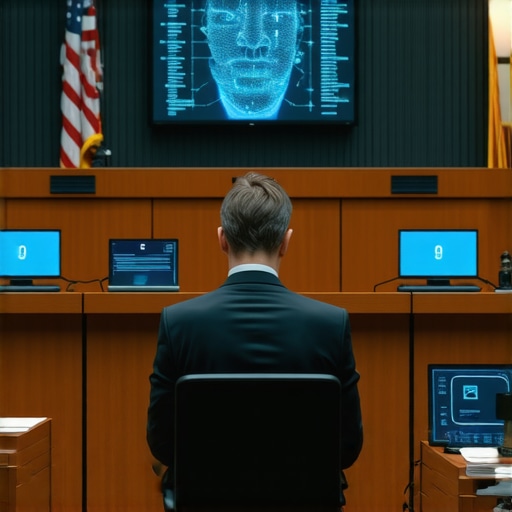

The coffee in the courthouse basement is burnt and bitter. It matches my mood when a client walks in thinking they are safe because they were only two sips into a drink. By 2026, the police do not need a breathalyzer to ruin your life. They use high-definition cameras and algorithms that claim to see into your nervous system. They call it facial impairment AI. I call it a high-tech guess that violates your civil liberties. Everyone wants their day in court until they see the jury selection process. It is not about truth; it is about perception. I have seen juries ignore the facts because they liked the tie the arresting officer wore. When you face an AI-driven DUI charge, you are not fighting a machine. You are fighting the programmer's bias and the state's hubris. I recently spent hours deconstructing a digital evidence file that claimed a client was intoxicated based on eye flutters. It turned out the camera was recording at a frame rate too low to capture the actual movement of the eye, so the software was analyzing motion it never really saw. If you trust the software, your case is failing before it starts. My job is to break the software.

The hardware failure of algorithmic biometric scans

Facial impairment AI depends on high-resolution sensor arrays and infrared depth mapping to detect micro-expressions associated with alcohol consumption. These biometric data points are often corrupted by ambient light interference and sensor degradation, which gives the defense grounds to challenge the admissibility of the DUI evidence in criminal court. When a patrol vehicle idles, the vibration of the chassis can introduce mechanical noise into the optical sensor. The software can interpret this jitter as a physical tremor in the suspect. We analyze the vibration harmonics of the specific vehicle model used during the stop. If the sensor was not calibrated within the thirty days before the stop, the data is trash. We demand the maintenance logs for every camera unit. Most departments cannot produce them. They rely on the myth of technology to secure a plea deal. We break that myth by showing the jury that the camera was shaking, not the client. The technical specs of these 2026 units require a stable environment that a roadside stop can never provide. The flashing blue and red lights create a strobe effect that throws off the camera's light sensitivity, which can produce a false positive for nystagmus. A skilled attorney knows that the environment is the enemy of the algorithm.
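The thirty-day calibration question is simple date arithmetic once the maintenance logs are in hand. Here is a minimal sketch in Python, assuming the log reduces to a list of calibration dates. The function names, the log format, and the sample dates are all hypothetical and come from no real case file or vendor system.

```python
from datetime import date, timedelta

# Assumption: the thirty-day window is taken from the text above;
# the log format (a plain list of dates) is hypothetical.
CALIBRATION_WINDOW = timedelta(days=30)


def last_calibration_before(stop_date, calibration_dates):
    """Return the most recent calibration on or before the stop, or None."""
    prior = [d for d in calibration_dates if d <= stop_date]
    return max(prior) if prior else None


def calibration_is_current(stop_date, calibration_dates, window=CALIBRATION_WINDOW):
    """True only if a logged calibration falls within the window before the stop."""
    last = last_calibration_before(stop_date, calibration_dates)
    if last is None:
        return False  # no usable log entry: treat the unit as uncalibrated
    return stop_date - last <= window


# Illustrative dates only.
log = [date(2026, 1, 5), date(2026, 2, 20)]
stop = date(2026, 4, 1)
print(calibration_is_current(stop, log))  # False: the last calibration was 40 days earlier
```

A calibration logged after the stop does not count here, and a missing log is treated the same as a stale one, which mirrors the position we take in the motion.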

“Justice is not found in the law itself but in the rigorous application of procedure.” – Common Law Maxim

Why racial bias in lighting destroys neural network accuracy

Neural network algorithms used in DUI detection frequently fail because of algorithmic bias and lighting disparities. Law enforcement agencies relying on 2026 AI technology ignore that skin tone and shadow casting can create false positives for neurological impairment, which raises Fourteenth Amendment equal protection and due process concerns. Most training sets for these AI models are built on data from specific demographics. When the system encounters a face outside its primary training set, the error rate spikes. This is not just a social issue; it is a forensic one. If the contrast ratio on the sensor is not adjusted for the skin tone of the suspect, the software cannot accurately track blood flow changes or muscle tension. This is where the defense strikes. We bring in data scientists to testify that the AI's error margin is significantly higher for the client than for the general population. While most lawyers tell you to challenge everything immediately, the strategic play is often a delayed discovery demand. This forces the state to commit to its technical findings before it realizes the calibration logs for the AI sensor are missing. We wait for the state to lock in its story, then we expose the bias in the code.
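When a data scientist testifies about a higher error margin, the core number is usually the false positive rate per group: of the sober people in each group, how many did the software flag as impaired? Here is a minimal sketch, assuming validation records are available as (group, flagged, actually impaired) tuples. The record layout, the group labels, and the sample data are invented for illustration and describe no real system.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """Compute, per group, the share of sober subjects the system flagged.

    records: iterable of (group, flagged_impaired, actually_impaired).
    """
    false_pos = defaultdict(int)
    sober = defaultdict(int)
    for group, flagged, impaired in records:
        if not impaired:          # only sober subjects can be false positives
            sober[group] += 1
            if flagged:
                false_pos[group] += 1
    return {g: false_pos[g] / sober[g] for g in sober}


# Hypothetical validation data, not drawn from any real study.
records = [
    ("A", True, False), ("A", False, False), ("A", False, False), ("A", False, False),
    ("A", True, True),
    ("B", True, False), ("B", True, False), ("B", False, False), ("B", False, False),
    ("B", True, True),
]
print(false_positive_rate_by_group(records))  # {'A': 0.25, 'B': 0.5}
```

In this made-up sample the software wrongly flags sober subjects in group B twice as often as in group A. A gap like that, shown with the vendor's own validation data, is what the expert puts in front of the jury.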

The legal strategy to suppress uncertified software evidence

A DUI lawyer uses Daubert motions to challenge proprietary software that lacks third-party validation. By demanding the source code and validation studies, a defense attorney can block algorithmic testimony if the prosecution cannot prove the scientific reliability of the AI software used during the traffic stop. The state often tries to hide behind trade secret protections. They claim they cannot show us how the AI works because it would hurt the developer’s profits. I do not care about profits when a client faces jail time. We file motions to compel the production of the source code. If they refuse, we move to suppress all evidence derived from that software. This is procedural warfare. We look for the ghost in the machine. Every piece of software has a bug. In the realm of 2026 DUI tech, the bug is often in the way the AI handles packet loss during wireless transmission to the station. If even one percent of the data is lost, the software has to fill the gaps, and the reconstruction of the face can be inaccurate. We prove that the evidence presented in court is a digital hallucination, not a recording of reality. The jury needs to understand that the machine is just a witness that can lie.
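The packet loss argument depends on counting what never arrived. Here is a minimal sketch, assuming the transmission log exposes a sequence number for each received packet. That assumption, the function names, and the sample numbers are hypothetical. The one percent threshold is the figure from the paragraph above.

```python
def missing_packets(received, first_seq, last_seq):
    """List the sequence numbers in [first_seq, last_seq] that never arrived."""
    seen = set(received)  # a set also absorbs duplicate retransmissions
    return [s for s in range(first_seq, last_seq + 1) if s not in seen]


def packet_loss_fraction(received, first_seq, last_seq):
    """Fraction of expected packets that are absent from the received log."""
    expected = last_seq - first_seq + 1
    if expected <= 0:
        raise ValueError("last_seq must not be smaller than first_seq")
    return len(missing_packets(received, first_seq, last_seq)) / expected


# Illustrative log: packets 1..200 were sent, and 17 and 118 never arrived.
received = [s for s in range(1, 201) if s not in (17, 118)]
loss = packet_loss_fraction(received, 1, 200)
print(missing_packets(received, 1, 200))  # [17, 118]
print(loss >= 0.01)                       # True: two of 200 packets is one percent
```

The list of missing sequence numbers matters as much as the percentage, because it tells us which frames the station had to reconstruct rather than record.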

“The use of proprietary algorithms in criminal proceedings must be balanced against the defendant’s right to confront the evidence against them.” – American Bar Association Resolution

The road to a dismissal is paved with technical objections. We examine the ISO settings on the officer's camera. We look at the firmware version the camera was running. If the firmware is outdated, the entire scan is compromised. This is the microscopic reality of modern litigation. You cannot win a DUI case in 2026 by talking about how much you had to drink. You win by showing that the state is using broken tools to measure a human being. The courtroom is a battlefield of logistics and procedure. We stay in the fight until the state realizes that its expensive AI is a liability, not an asset. That is how we win.